Best Practices for Data Privacy in Coaching Platforms

February 23, 2026

Protecting sensitive data on coaching platforms is essential for building trust and ensuring compliance with privacy laws like GDPR and CCPA. Here's how platforms can safeguard user information effectively:

- Embed privacy into design: Integrate data protection measures from the start, following principles like limiting data collection and ensuring transparency.

- Use encryption: Secure stored and transmitted data with strong encryption methods like AES-256 and TLS 1.3 to prevent unauthorized access.

- Control access: Implement Role-Based Access Controls (RBAC) to limit data access based on user roles, reducing exposure risks.

- Obtain clear consent: Use opt-in mechanisms for data collection, and ensure users can easily modify or withdraw consent.

- Automate retention policies: Delete or anonymize data when it's no longer needed, reducing risks and meeting legal requirements.

- Safeguard AI systems: Protect data used in AI systems with techniques like differential privacy and continuous monitoring.

- Be transparent: Clearly communicate data handling policies, including who can access what and why, to maintain user trust.

1. Privacy-by-Design Principles

Privacy-by-Design focuses on embedding data protection into a platform’s core structure. This method involves identifying and addressing privacy risks during the design stage, rather than waiting for vulnerabilities to arise later. For example, under regulations like UK GDPR Article 25, businesses must integrate privacy considerations into every part of their processing activities from the very beginning.

Shane Tierney, Senior Program Manager at Drata, highlights the philosophy behind this approach:

"Privacy by Design tries to set out these tent poles and ethos of how to actually approach any given project or initiative that a company would take on. It tries to be almost project agnostic."

The Privacy-by-Design framework revolves around seven key principles:

- Proactive, not reactive: Identify and address privacy risks early.

- Privacy as the default setting: Ensure users are automatically protected without requiring extra steps.

- Privacy embedded into design: Build privacy into the platform’s architecture from the start.

- Full functionality: Maintain performance while safeguarding privacy.

- End-to-end security: Protect data throughout its entire lifecycle, from collection to deletion.

- Visibility and transparency: Clearly communicate how data is handled.

- Respect for user privacy: Prioritize individual privacy rights with strong default settings.

For coaching platforms that handle sensitive topics like mental health, finances, or personal relationships, these principles are essential for fostering trust. They also guide practices such as collecting only the data that’s absolutely necessary.

Collecting Only Necessary Data

Data minimization is about collecting only what’s essential for the coaching relationship. Gathering unnecessary information - like asking for extensive personal details that are never used - not only complicates processes but also increases risks, as every extra data point becomes a potential vulnerability in a breach.

To address this, coaching platforms should review every instance where they collect data, including discovery call forms, intake questionnaires, session notes, and payment systems. For each piece of information, they should ask: “Is this data truly necessary for achieving the coaching goal?” For instance, if a platform collects birth dates for sending birthday messages, it might be enough to ask for just the month and day rather than the full date. Similarly, location data should only be requested when it’s directly needed, such as for matching clients with nearby coaches, rather than during the initial sign-up process.

Under GDPR, platforms must define a clear legal basis for each piece of data they collect. This requirement helps ensure that only essential information - like what’s truly needed to deliver a service - is gathered, reducing unnecessary complexity and liability while improving the user experience.
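One way to make this review enforceable is an allow-list applied at every collection point, so fields that were never justified are dropped before storage. The sketch below is illustrative only: the form names, field names, and the `minimize` helper are assumptions, not part of any specific platform.

```python
from datetime import date

# Hypothetical allow-list: the fields each collection point genuinely needs.
ALLOWED_FIELDS = {
    "signup": {"email", "display_name"},
    "intake": {"email", "coaching_goal", "birthday"},
}


def minimize(form: str, submitted: dict) -> dict:
    """Keep only allow-listed fields; everything else is dropped before storage."""
    allowed = ALLOWED_FIELDS.get(form, set())  # unknown forms keep nothing
    kept = {k: v for k, v in submitted.items() if k in allowed}
    # Birthday messages need only month and day, so the year is discarded.
    if isinstance(kept.get("birthday"), date):
        b = kept["birthday"]
        kept["birthday"] = f"{b.month:02d}-{b.day:02d}"
    return kept


record = minimize("intake", {
    "email": "client@example.com",
    "coaching_goal": "career change",
    "birthday": date(1990, 4, 17),
    "home_address": "1 Example Street",  # never needed, so never stored
})
```

Because the default for an unknown form is an empty allow-list, adding a new field requires a deliberate decision rather than happening by accident.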

Anonymization and Pseudonymization

In addition to limiting data collection, platforms must protect the data they do handle using techniques like anonymization and pseudonymization.

- Anonymization: This process removes all identifying details so thoroughly that the data can no longer be linked to an individual. Once anonymized, the data is no longer classified as "personal data" under GDPR, meaning the regulation no longer applies. This method is ideal for generating aggregate statistics, such as overall client satisfaction scores or common topics, without exposing personal information.

- Pseudonymization: Here, identifiable information (like names or email addresses) is replaced with artificial labels, while the key to reverse the process is stored securely and separately. This allows platforms to analyze trends and track progress over time while keeping user identities protected. However, since pseudonymized data can still be traced back to individuals, GDPR regulations continue to apply.

| Technique | Reversibility | Data Status | Best Use Case |
|---|---|---|---|
| Anonymization | Irreversible | No longer personal data | Public reports, aggregate analytics, testing datasets |
| Pseudonymization | Reversible with key | Still personal data | Ongoing analysis, progress tracking, internal operations |

For coaching platforms, pseudonymization is especially useful when processing session transcripts for quality reviews or AI training. Analysts can examine patterns in conversations without knowing which client said what. Meanwhile, sensitive details like names, contact information, and payment data should remain secured in a separate, highly protected database. This separation makes it harder for unauthorized users to link session data to specific individuals, reducing risk. For platforms dealing with deeply personal topics, these measures are essential for maintaining user trust.
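As a rough illustration of pseudonymization, the sketch below replaces a client identifier with a stable keyed token and keeps the token-to-identity mapping in a separate structure. The `Pseudonymizer` class, the `client_` prefix, and the in-memory vault are assumptions made for the example. In practice the secret key would come from a key management system and the mapping would live in a separate, tightly controlled database.

```python
import hashlib
import hmac
import secrets


class Pseudonymizer:
    """Swaps identifiers for stable tokens; the vault is the re-identification key."""

    def __init__(self, secret_key: bytes):
        self._key = secret_key  # assumption: loaded from a KMS, never hard-coded
        self._vault = {}        # token -> identity; stored separately in production

    def token_for(self, identity: str) -> str:
        # A keyed hash gives the same token for the same client every time,
        # but cannot be recomputed by anyone who lacks the secret key.
        digest = hmac.new(self._key, identity.encode("utf-8"), hashlib.sha256).hexdigest()
        token = "client_" + digest[:16]
        self._vault[token] = identity
        return token

    def reidentify(self, token: str) -> str:
        """Reverse lookup, intended only for authorized staff with vault access."""
        return self._vault[token]


pseudonymizer = Pseudonymizer(secrets.token_bytes(32))
transcript = {"client": "ana@example.com", "text": "I want to change careers."}
safe_transcript = {
    "client": pseudonymizer.token_for(transcript["client"]),
    "text": transcript["text"],
}
```

Note that this only replaces the structured identifier. Free-text transcripts can still contain names or other identifying details, which would need separate redaction.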

2. Clear Data Handling Policies

Privacy-by-design is a great starting point, but it’s only part of the picture. Clear data handling policies are equally important - they help users and administrators understand how data is managed. Even if privacy features are built-in, users need to know exactly what happens with their data. This is especially true in coaching, where trust is the foundation of the relationship. Without transparency, users may hesitate to share sensitive information.

Open Communication About Data Practices

A strong policy breaks data handling into categories that are easy to understand. For example, platforms can label data as Account Data, Usage Data, or Coaching Data, with clear explanations for each. Take IP addresses - they might be used to identify the nearest server for better video quality during coaching sessions. Simple, clear explanations like this make a big difference.

Using a "Who sees what, and why" approach also helps. For instance, coach matching preferences might be visible to the team handling pairings, but session transcripts stay private and are never shared with employers. These boundaries give users confidence about what’s shared and what’s not.

"The expectation of privacy in a coaching relationship is essential. It is vital to the foundation of trust between coach and client that is so often necessary to do meaningful work." – CoachAccountable

For workplace coaching platforms, it’s critical to outline what sponsoring employers can access. For example, employers might see attendance statistics in aggregate form but won’t have access to session notes or private messages. Some platforms even go a step further, sharing anonymized themes from AI conversations only when a minimum number of users participate. This ensures individual privacy while still offering useful insights for organizations.
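The minimum-participation rule can be sketched as a simple suppression threshold: a theme is reported to the employer only if enough distinct users raised it. The threshold of five and the `reportable_themes` helper are assumptions for illustration; the right threshold depends on the size of the organization.

```python
from collections import defaultdict

MIN_GROUP_SIZE = 5  # assumed threshold; tune to the size of the sponsoring organization


def reportable_themes(events, min_users=MIN_GROUP_SIZE):
    """events: iterable of (user_id, theme) pairs.

    Returns theme -> number of distinct users, omitting any theme raised by
    fewer than min_users people so small groups cannot be singled out.
    """
    users_by_theme = defaultdict(set)
    for user_id, theme in events:
        users_by_theme[theme].add(user_id)
    return {
        theme: len(users)
        for theme, users in users_by_theme.items()
        if len(users) >= min_users
    }


events = [(f"u{i}", "workload") for i in range(6)]
events += [("u1", "workload"), ("u1", "conflict with manager")]
report = reportable_themes(events)
```

Counting distinct users rather than raw mentions matters here: one person raising a topic many times should not push it over the threshold.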

Transparency also extends to third-party providers. Platforms should maintain a public list of subprocessors, like cloud hosting or payment services, and clearly define what access these providers have. Some platforms even offer subscription updates to notify users of changes to the subprocessor list, reinforcing their commitment to openness.

Next, let’s consider how platforms can prevent hidden data practices that erode trust.

Preventing Hidden Data Collection

Hidden or unauthorized data collection is a major trust-breaker. GDPR requires a lawful basis, such as consent, before personal data is processed, while CCPA requires notice at or before the point of collection and gives users the right to opt out of data sales or sharing. Data practices must align with users’ expectations and serve their best interests.

One example comes from March 2025, when an AI sales coaching platform introduced a strict policy banning the sale of client data and prohibiting hidden data collection. This policy, rooted in GDPR principles, ensures that administrators only see anonymized employee progress scores. Such transparency reassures users that their data is safe.

"Transparency and consent are foundational to gaining employee trust in AI-driven coaching programs." – Pandatron

Platforms should also request permissions - like access to the camera, microphone, or location - only when those features are actively used. For instance, privacy notices might explain that location data is gathered through IP addresses solely to connect users to the nearest server for better video quality. Explicit permissions are required for using the camera or microphone, ensuring users are fully informed.

Generative AI introduces another layer of complexity. When sensitive information is uploaded without proper safeguards, it can lead to serious risks. According to Verizon’s 2025 Data Breach Investigations Report, 15% of employees routinely use generative AI tools on work devices, often through unsanctioned logins. This creates compliance and security vulnerabilities. Coaching platforms must clearly state whether AI conversations are recorded, how data is stored, and whether users can delete AI-generated content. These steps build the trust needed for digital coaching to thrive.

3. Encryption Standards

Strong encryption is the backbone of data security. Without it, even the most detailed data handling policies fall short, leaving information exposed. For coaching platforms that deal with sensitive conversations, financial transactions, and personal goals, encryption isn’t just important - it’s non-negotiable. Even in the event of a breach, encrypted data is essentially useless to attackers, provided the encryption keys are not compromised along with it.

Encrypting Stored Data

Data stored in databases is a prime target for unauthorized access, making encryption a critical safeguard. The go-to standard for this purpose is AES-256 (Advanced Encryption Standard with a 256-bit key). Its strength lies in the sheer size of its keyspace - there are 2^256 possible keys, or approximately 115 quattuorvigintillion (a 78-digit number, roughly 1.16 × 10^77). Brute-force attacks simply don’t stand a chance.
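The size of the AES-256 keyspace can be checked with a few lines of arithmetic. The attacker speed used below (10^18 guesses per second) is a deliberately generous assumption chosen for illustration, not a measured figure.

```python
keyspace = 2 ** 256                 # number of possible 256-bit keys

digits = len(str(keyspace))         # how many decimal digits the number has
leading_digits = str(keyspace)[:3]  # first three digits of the number

# Hypothetical attacker testing 10**18 keys per second, around the clock.
guesses_per_second = 10 ** 18
seconds_per_year = 365 * 24 * 3600
years_to_try_every_key = keyspace // (guesses_per_second * seconds_per_year)
```

Even under that assumption, trying every key would take more than 10^50 years, which is why attacks in practice target implementation mistakes and key handling rather than the cipher itself.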

However, the algorithm itself isn’t the only thing that matters - how it’s implemented is equally crucial. Shockingly, over 70% of encryption vulnerabilities stem from implementation errors rather than flaws in the cryptography itself. One effective approach is envelope encryption. Here’s how it works:

- A Data Encryption Key (DEK) encrypts the actual data.

- A separate Key Encryption Key (KEK) encrypts the DEK.

- The KEK is securely stored in a dedicated Key Management System (KMS) or Hardware Security Module (HSM), while the encrypted data resides in the database.
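The steps above can be sketched with AES-256-GCM from the third-party `cryptography` package (an assumed dependency, installed with `pip install cryptography`). The function names and the dictionary layout are hypothetical. In a real deployment the KEK would stay inside the KMS or HSM, and wrapping and unwrapping the DEK would be calls to that service rather than local operations.

```python
import os

from cryptography.hazmat.primitives.ciphers.aead import AESGCM


def encrypt_record(kek: bytes, plaintext: bytes) -> dict:
    """Encrypt data with a fresh DEK, then wrap the DEK with the KEK."""
    dek = AESGCM.generate_key(bit_length=256)  # new data key for each record
    data_nonce = os.urandom(12)                # 96-bit nonces, never reused per key
    key_nonce = os.urandom(12)
    return {
        "ciphertext": AESGCM(dek).encrypt(data_nonce, plaintext, None),
        "data_nonce": data_nonce,
        "wrapped_dek": AESGCM(kek).encrypt(key_nonce, dek, None),
        "key_nonce": key_nonce,
    }


def decrypt_record(kek: bytes, row: dict) -> bytes:
    """Unwrap the DEK with the KEK, then decrypt the data with the DEK."""
    dek = AESGCM(kek).decrypt(row["key_nonce"], row["wrapped_dek"], None)
    return AESGCM(dek).decrypt(row["data_nonce"], row["ciphertext"], None)


# Stand-in for a key that would normally be held by a KMS or HSM.
kek = AESGCM.generate_key(bit_length=256)
note = b"Session note: client discussed burnout."
row = encrypt_record(kek, note)
```

Only the wrapped DEK is stored next to the ciphertext, so a copy of the database alone reveals nothing. Rotating the KEK then means re-wrapping the small DEKs rather than re-encrypting every record.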

"The right encryption implementation for your specific needs can protect against data breaches, ensure regulatory compliance, and provide critical security assurances to customers and stakeholders." – Christopher Porter, Training Camp

Another best practice is using authenticated encryption modes, such as GCM (Galois/Counter Mode), with block ciphers like AES. These modes ensure both confidentiality and data integrity, making it computationally infeasible for attackers to tamper with encrypted data undetected. Additionally, encryption keys should be rotated regularly. Automated systems can generate new keys and re-encrypt data periodically, so that no single key protects data for longer than necessary.

While this secures stored data, protecting information as it moves across networks is just as important.

Encrypting Data in Transit

When data travels between a user’s device and the platform, it’s at risk of interception. That’s where TLS 1.3 (Transport Layer Security) comes in. As the latest version of the protocol, TLS 1.3 is both faster and more secure than its predecessors. It eliminates outdated features that once created vulnerabilities, making it the clear choice for modern platforms. To comply with security standards, platforms must disable earlier protocol versions: SSL and early TLS (1.0 and 1.1) are obsolete, and the IETF formally deprecated TLS 1.0 and 1.1 in RFC 8996. AWS, for example, announced that it would deprecate TLS versions earlier than 1.2 by February 2024.

Additional safeguards include:

- HSTS (HTTP Strict Transport Security): Enforces HTTPS, ensuring all communication occurs over secure channels.

- Session cookies marked as "Secure": Ensure the browser sends the cookie only over HTTPS, so session identifiers are never transmitted over unencrypted connections.

- Disabling TLS compression: Protects against CRIME attacks, which exploit compression to extract sensitive data.

For coaching platforms, these measures apply to every interaction - whether it’s video calls, messaging, or file sharing. TLS not only keeps data confidential but also ensures its integrity and authenticity. It allows users to confirm they’re communicating with the legitimate platform, not an imposter. This trifecta of confidentiality, integrity, and authentication is what makes secure communication possible, fostering trust in the coaching relationship.
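A minimal sketch of these transport settings using only Python's standard library is shown below. The function names, the one-year HSTS lifetime, and the cookie name are assumptions for illustration. Many platforms terminate TLS at a load balancer or reverse proxy instead, in which case the same settings belong in that layer's configuration.

```python
import ssl
from http.cookies import SimpleCookie


def make_server_context() -> ssl.SSLContext:
    """Server-side TLS context that refuses SSL, TLS 1.0, and TLS 1.1."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    # TLS 1.2 is the floor; TLS 1.3 is negotiated whenever the client supports it.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.options |= ssl.OP_NO_COMPRESSION  # no TLS compression (CRIME mitigation)
    # A real server would also call ctx.load_cert_chain(...) with its certificate.
    return ctx


# Response header that tells browsers to use HTTPS only (assumed one-year lifetime).
HSTS_HEADER = ("Strict-Transport-Security", "max-age=31536000; includeSubDomains")


def session_cookie(session_id: str) -> str:
    """Build a Set-Cookie value that is only ever sent over HTTPS."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    cookie["session"]["secure"] = True      # never sent over plain HTTP
    cookie["session"]["httponly"] = True    # not readable from page scripts
    cookie["session"]["samesite"] = "Strict"
    return cookie["session"].OutputString()


context = make_server_context()
cookie_value = session_cookie("abc123")
```

Setting `minimum_version` to `ssl.TLSVersion.TLSv1_3` is the stricter option when every supported client is known to handle TLS 1.3.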

4. Role-Based Access Controls

Even with top-notch encryption, managing who can access data is just as important for creating a strong data privacy framework on coaching platforms. Role-Based Access Controls (RBAC) ensure that users only access the information they need for their specific roles. This approach follows the principle of least privilege, limiting exposure to only what's necessary. According to the OWASP 2021 Top 10, Broken Access Control is the leading web security vulnerability and continues to be a major concern in actual security incidents.

Limiting Access to Only What’s Necessary

RBAC operates on a "deny by default" principle. Access to resources is granted only when explicitly allowed by specific rules. This reduces the chances of accidental logic errors that could leak sensitive data. For instance, a coach would only access their own clients’ data, while an administrator could view billing records but not private session details.

"Authorization may be defined as 'the process of verifying that a requested action or service is approved for a specific entity'" – NIST

To ensure security, platforms must validate user permissions for every request - whether it’s an AJAX call, file download, or database query - and these checks must happen on the server side. Additionally, sensitive database IDs should never appear in URLs. Instead, using session-specific indirect references can prevent Insecure Direct Object Reference (IDOR) attacks. Static resources like session recordings, PDF assessments, or uploaded files should also be secured to avoid exposure through publicly accessible cloud storage. These measures create a solid foundation for conducting regular access reviews.
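A deny-by-default check might look like the following sketch. The roles, action names, and the `is_allowed` helper are hypothetical; the point is that access is granted only when a rule explicitly allows it, and that record-level ownership is checked on the server in addition to the role.

```python
from dataclasses import dataclass

# Hypothetical mapping of roles to the actions they are explicitly granted.
ROLE_PERMISSIONS = {
    "coach": {"read_session_notes", "write_session_notes"},
    "admin": {"read_billing"},
    "client": {"read_own_profile"},
}

# Actions that are additionally scoped to the client's assigned coach.
COACH_SCOPED_ACTIONS = {"read_session_notes", "write_session_notes"}


@dataclass(frozen=True)
class User:
    user_id: str
    role: str


def is_allowed(user: User, action: str, assigned_coach_id=None) -> bool:
    """Return True only when a rule explicitly grants the action."""
    if action not in ROLE_PERMISSIONS.get(user.role, set()):
        return False  # unknown role or ungranted action: denied by default
    if action in COACH_SCOPED_ACTIONS:
        # A coach may only touch notes for clients assigned to them.
        return assigned_coach_id == user.user_id
    return True


coach = User("coach-1", "coach")
admin = User("admin-1", "admin")
```

Running this check on every request, with the assigned coach looked up from the database rather than taken from the request, is what keeps a guessed or altered record ID from exposing another client's notes.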

Regular Access Reviews

Setting access controls isn’t a one-and-done task. Over time, users can accumulate unnecessary permissions, a situation known as privilege creep, which increases the likelihood of internal misuse. As OWASP notes:

"It is easier to grant users additional permissions rather than to take away some they previously enjoyed." – OWASP

To combat privilege creep, quarterly audits are recommended to review user permissions across platforms. These audits become especially important as coaching teams grow, adding roles like virtual assistants, co-coaches, or external contractors. With small service businesses experiencing an 18% annual increase in data breaches as of 2026, regular audits are a crucial step in staying secure.

Detailed logs of data access - tracking who accessed what and when - are invaluable for spotting suspicious activity and resolving issues. Automated logging can also make it easier to comply with regulatory requirements.
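Access logs can also feed the permission review itself. The sketch below flags grants that have not been exercised within a chosen window, which is one possible signal of privilege creep. The 90-day window, the tuple layout, and the `stale_grants` helper are assumptions for illustration.

```python
from datetime import datetime, timedelta


def stale_grants(grants, access_log, now, max_idle_days=90):
    """Return grants that were not used within the last max_idle_days.

    grants: set of (user, permission) pairs currently in force.
    access_log: iterable of (user, permission, timestamp) entries.
    """
    last_used = {}
    for user, permission, timestamp in access_log:
        key = (user, permission)
        if key not in last_used or timestamp > last_used[key]:
            last_used[key] = timestamp
    cutoff = now - timedelta(days=max_idle_days)
    # Grants that never appear in the log count as stale too.
    return sorted(g for g in grants if last_used.get(g, datetime.min) < cutoff)


grants = {
    ("coach-1", "read_session_notes"),
    ("assistant-1", "read_billing"),
    ("contractor-1", "read_session_notes"),
}
access_log = [
    ("coach-1", "read_session_notes", datetime(2026, 2, 1)),
    ("assistant-1", "read_billing", datetime(2025, 9, 1)),
]
to_review = stale_grants(grants, access_log, now=datetime(2026, 2, 23))
```

The output is a review list, not an automatic revocation: a person should still confirm that each flagged permission is no longer needed.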

For roles with access to highly sensitive data, adding Multi-Factor Authentication (MFA) or advanced security protocols like FIDO2 provides an additional layer of protection.

5. User Consent and Transparency

Giving users control over their data starts with strict access controls, but it doesn’t stop there. Explicit user consent plays a crucial role in empowering individuals and reinforcing trust. Beyond being a legal requirement under regulations like GDPR and CCPA, consent reflects the ethical foundation of any coaching relationship. When platforms handle sensitive data - like health details, financial records, or session notes - users need to clearly understand what they’re agreeing to and who will access their information.

Consent shouldn’t feel like a box-ticking exercise. Overly complex, jargon-heavy policies can lead to what experts call "illusory control", where users click "accept" without fully understanding the implications. The Office of the Privacy Commissioner of Canada highlights the importance of meaningful consent:

"Consent should remain central, but it is necessary to breathe life into the ways in which it is obtained."

Users must also have the flexibility to withdraw or modify their consent as easily as they gave it.

Writing Understandable Privacy Policies

A well-written privacy policy is the foundation of informed consent. According to UK GDPR Article 7, consent requests must be clear, accessible, and written in plain language. Here’s what that looks like in practice:

- Use straightforward language. For example, instead of saying, "We may use data for research purposes", say, "We analyze how you use our website to improve its design."

- Write in the active voice and break down information into bullet points. Clearly outline what data is collected, its purpose, who it’s shared with, and any potential risks.

- Take a layered approach. Present the most critical details upfront while linking to more detailed sections for users who want further information.

Platforms should also name their organization, representative, and Data Protection Officer (DPO) in the policy, along with any third-party processors that rely on user consent. For instance, a coaching platform recently updated its privacy policy to include OAuth workflows for syncing with external calendars like Google, iCloud, or Office365. This method allows the platform to manage calendar events without storing users’ login credentials.

The stakes for non-compliance are steep. GDPR violations can lead to fines of up to €20 million or 4% of global annual turnover, whichever is higher, while UK GDPR penalties can reach £17.5 million or 4% of annual worldwide turnover, again whichever is higher.

Once policies are in place, platforms should implement opt-in mechanisms that actively involve users in their data decisions.

Opt-In Data Collection

Consent must be deliberate and unambiguous. Silence, inactivity, or pre-checked boxes don’t count. As the Information Commissioner’s Office (ICO) explains:

"Silence or inactivity doesn't qualify. So, you cannot assume that people who don't engage with your consent mechanism have agreed to the storage and access technologies you want to use."

Instead of requiring users to opt out, platforms should adopt opt-in systems for non-essential purposes like marketing or third-party data sharing. This means offering granular options - separate checkboxes for things like analytics, promotional emails, or session recordings - each with clear "yes" or "no" choices. Also, ensure a "Reject All" option is as visible and accessible as "Accept All".

Feature-specific consent is another effective strategy. For example, ask for permission just before a user interacts with a specific feature, like starting a chatbot or watching an embedded video. This approach provides timely explanations of what data will be collected and why.

Equally important is the ability to withdraw consent. The ICO stresses:

"You must ensure that any consent mechanism has the technical capability to allow users to withdraw their consent with the same ease that they gave it."

Platforms can achieve this by including a persistent settings icon or a profile management link, enabling users to update their preferences with a single click. The ICO also suggests requesting fresh consent for data storage and access technologies every six months.
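The opt-in, withdrawal, and refresh rules described in this section can be modeled as a small consent record. This is a sketch under stated assumptions: the purpose names, the 182-day refresh window, and the `ConsentRecord` class are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

PURPOSES = ("analytics", "promotional_emails", "session_recording")
RECONSENT_AFTER = timedelta(days=182)  # roughly six months


@dataclass
class ConsentRecord:
    # purpose -> time consent was given; empty by default, so nothing is pre-checked
    granted_at: dict = field(default_factory=dict)

    def grant(self, purpose: str, now: datetime) -> None:
        if purpose not in PURPOSES:
            raise ValueError(f"unknown purpose: {purpose}")
        self.granted_at[purpose] = now

    def withdraw(self, purpose: str) -> None:
        """Withdrawing is a single call, mirroring how consent was given."""
        self.granted_at.pop(purpose, None)

    def is_active(self, purpose: str, now: datetime) -> bool:
        granted = self.granted_at.get(purpose)
        return granted is not None and now - granted < RECONSENT_AFTER


consent = ConsentRecord()
signup_time = datetime(2026, 1, 10)
consent.grant("analytics", signup_time)
```

Each purpose is tracked separately, so agreeing to analytics never implies agreement to promotional emails or session recording.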

To enhance transparency further, some platforms adopt Responsible Vulnerability Disclosure (RVD) policies. These policies outline how security experts can report vulnerabilities, define the scope of digital resources, and set acknowledgment timeframes. Additionally, maintaining a publicly accessible list of subprocessors allows users to monitor which third-party providers handle their data, strengthening trust and accountability.

6. Data Retention and Deletion Policies

Collecting user consent is just the beginning; platforms also need to ensure data is deleted when it's no longer needed. Like encryption and access controls, effective data retention practices are a key part of a platform's privacy framework. Both GDPR and UK GDPR mandate that personal data can only be stored in an identifiable form for as long as it serves its original purpose. The Information Commissioner's Office (ICO) emphasizes this point:

"You must not hold personal information indefinitely, 'just in case' it might be useful in the future."

Beyond meeting legal requirements, deleting unnecessary data reduces risk by removing potential targets for breaches. Organizations that neglect these principles can face hefty penalties. With data breaches in small service businesses increasing by 18% year-over-year as of 2026, clearing out outdated data has become a crucial defense strategy. This combination of regulatory requirements and risk management underscores the importance of clear retention policies and automated systems.

Matching Retention to Purpose

Not all data should be treated the same - different types of information require tailored retention periods. For example, financial records often need to be kept for statutory periods, while session notes might only be necessary during the course of a coaching relationship. In healthcare, HIPAA requires covered entities to keep required compliance documentation, such as policies and procedures, for at least six years from its creation or the date it was last in effect, while retention periods for medical records themselves are generally set by state law.

To manage this effectively, categorize data - such as session notes, recordings, payment histories, or marketing emails - and assign retention timelines that align with legal obligations and business needs. Once data storage costs outweigh its benefits, it should be deleted. Additionally, platforms must implement "legal hold" procedures to temporarily pause deletions when data is required for litigation or investigations.

Automated Deletion Systems

Manual deletion processes are prone to mistakes and delays, making automation a necessity. Automated systems can tag records with retention dates and ensure timely deletion. The ICO advises using built-in retention features to streamline this process:

"Use built-in system retention periods to purge electronic records and emails automatically once the retention period has expired."

Even with automation, regular audits - ideally conducted quarterly - are essential to identify files that may have bypassed automated workflows, such as those stored in shared folders or personal drives. In cases where permanent deletion isn't technically feasible, automated systems should render the data inaccessible by restricting access and securely archiving it. For platforms relying on third-party services for data disposal, obtaining electronic confirmations or destruction certificates is a good practice to ensure compliance.
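A retention sweep along these lines could be sketched as follows. The categories, retention periods, and record layout are hypothetical, and the function only selects records. The actual deletion or anonymization step depends on the platform's storage.

```python
from datetime import date, timedelta

# Hypothetical retention periods, in days, counted from when the purpose ended.
RETENTION_DAYS = {
    "session_notes": 365,
    "recordings": 90,
    "payment_history": 7 * 365,
}


def due_for_deletion(records, today):
    """Return IDs of records whose retention period has expired.

    Each record is a dict with: id, category, purpose_ended (date), legal_hold (bool).
    """
    due = []
    for record in records:
        if record["legal_hold"]:
            continue  # deletion is paused while litigation or an investigation is open
        limit = RETENTION_DAYS.get(record["category"])
        if limit is None:
            continue  # unknown category: left for manual review, not deleted blindly
        if today >= record["purpose_ended"] + timedelta(days=limit):
            due.append(record["id"])
    return due


records = [
    {"id": "n1", "category": "session_notes", "purpose_ended": date(2024, 6, 1), "legal_hold": False},
    {"id": "r1", "category": "recordings", "purpose_ended": date(2026, 1, 15), "legal_hold": False},
    {"id": "r2", "category": "recordings", "purpose_ended": date(2025, 1, 15), "legal_hold": True},
    {"id": "x1", "category": "misc_uploads", "purpose_ended": date(2020, 1, 1), "legal_hold": False},
]
expired = due_for_deletion(records, today=date(2026, 2, 23))
```

Running a job like this on a schedule, and logging what it selected, gives auditors a record that retention periods are actually being applied.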

7. Securing AI and Monitoring Systems

As coaching platforms continue to integrate AI for tasks like matching algorithms, personalized recommendations, and session insights, safeguarding the sensitive data these systems handle becomes a top priority. Unlike traditional software, AI models can unintentionally retain fragments of their training data, which raises the risk of data exposure. The OWASP AI Exchange offers over 200 pages of practical advice and references to help secure AI systems effectively.

Privacy-Preserving AI Techniques

Differential privacy has emerged as the standard for maintaining individual privacy in AI systems. As researcher Natalia Ponomareva and her team explain:

"Differential Privacy (DP) has become a gold standard for making formal statements about data anonymization".

Rather than relying on traditional anonymization methods, differential privacy introduces precise, measurable safeguards. By adding carefully calibrated noise to datasets or model outputs, it ensures that no single individual's data disproportionately influences the results.

For coaching platforms, this approach allows AI systems to identify patterns - like which coaching methods are most effective for specific goals - without compromising sensitive details such as session notes or personal information. To guide organizations in assessing their privacy measures, the National Institute of Standards and Technology (NIST) provides a "differential privacy pyramid" framework. Beyond differential privacy, privacy-by-design principles emphasize embedding privacy protections throughout the AI lifecycle, from initial development to deployment and maintenance. This includes practices like collecting only the minimum data required for the system’s purpose and encrypting all data used during training or operation.
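To make the idea of calibrated noise concrete, the sketch below applies the Laplace mechanism to a simple counting query. It is a teaching example only: the function names and the sample data are assumptions, and naive floating-point noise is known to have subtle weaknesses, so a production system would more likely rely on a vetted differential privacy library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Differentially private count of the values that match the predicate.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so the Laplace noise scale is 1 / epsilon.
    """
    true_count = sum(1 for value in values if predicate(value))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical data: the primary goal recorded for each of 100 clients.
goals = ["career"] * 30 + ["leadership"] * 50 + ["wellbeing"] * 20
noisy_career_count = dp_count(goals, lambda g: g == "career", epsilon=1.0, rng=random.Random())
```

A smaller `epsilon` adds more noise and gives stronger privacy, while a larger one gives more accurate counts. Repeated queries consume privacy budget, so results should be released deliberately rather than on demand.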

Monitoring for Security Threats

While privacy measures protect data at its core, continuous monitoring is essential for identifying and addressing potential threats as they arise. Static security protocols alone are insufficient - AI systems demand ongoing oversight. As security experts at RAND highlight:

"AI security is not achieved through a one-time checklist - it is an ongoing, risk-based, multilayered process".

Effective monitoring involves tracking the integrity of data pipelines and observing model behavior for anomalies. This includes detecting unusual access patterns, unauthorized login attempts, and irregular data activity that could indicate breaches or tampering. Logging API calls and data transformations further ensures that any unauthorized changes can be traced back to specific users or timestamps. Automated alert systems play a critical role, notifying security teams of suspicious activity in real time so they can respond quickly. Additionally, regular audits are vital. Certain threats, like data poisoning - where malicious actors introduce harmful data into training sets - often require human analysis to uncover.
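As one small example of automated alerting, the sketch below raises a flag when an account accumulates too many failed logins inside a sliding time window. The thresholds and the `FailedLoginMonitor` class are assumptions for illustration. A real deployment would feed this from the platform's authentication logs and route alerts to the security team.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Flags accounts with too many failed logins inside a sliding window."""

    def __init__(self, max_failures: int = 5, window_seconds: float = 300):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)  # account -> recent failure timestamps

    def record_failure(self, account: str, timestamp: float) -> bool:
        """Record one failed login; return True if an alert should be raised."""
        recent = self._failures[account]
        recent.append(timestamp)
        # Drop failures that have fallen out of the window.
        while recent and timestamp - recent[0] > self.window_seconds:
            recent.popleft()
        return len(recent) >= self.max_failures


monitor = FailedLoginMonitor(max_failures=5, window_seconds=300)
alerts = [monitor.record_failure("coach-1", t) for t in (0, 10, 20, 30, 40)]
```

In this run only the fifth failure within the window triggers an alert. The same pattern extends to other signals mentioned above, such as unusual export volumes or access at unexpected hours.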

These AI-specific security practices work in tandem with broader platform protections, ensuring a robust defense across all components of the system.

8. User Control Over Personal Data

Giving users direct control over their personal data isn't just a good practice - it’s a legal obligation under most privacy regulations. For coaching platforms, which often deal with sensitive conversations and personal goals, this level of control becomes even more essential to maintain user trust.

Providing Data Access

Users should be able to access their data quickly and without unnecessary hurdles. According to the Information Commissioner's Office (ICO):

"You should consider how to help people exercise their rights directly through your service".

This can be achieved by offering a secure online portal or dashboard where users can view, download, and update their information at any time.

The accessible data should include both user-provided information and system-generated data. For platforms that support data portability, these exports should be in structured, machine-readable formats such as CSV or JSON. This level of access naturally extends to simple and clear processes for data deletion.
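A data export in both formats can be produced with the standard library alone. The field names and sample data below are hypothetical. A real export would gather everything the platform holds about the user, including system-generated data.

```python
import csv
import io
import json


def export_json(user_data: dict) -> str:
    """Full export as JSON; non-JSON types such as dates fall back to strings."""
    return json.dumps(user_data, indent=2, sort_keys=True, default=str)


def export_sessions_csv(sessions: list) -> str:
    """Session history as CSV with a fixed header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["date", "coach", "duration_minutes"])
    writer.writeheader()
    writer.writerows(sessions)
    return buffer.getvalue()


user_data = {
    "profile": {"display_name": "Ana", "email": "ana@example.com"},
    "sessions": [
        {"date": "2026-01-12", "coach": "J. Rivera", "duration_minutes": 45},
        {"date": "2026-02-02", "coach": "J. Rivera", "duration_minutes": 60},
    ],
}
json_export = export_json(user_data)
csv_export = export_sessions_csv(user_data["sessions"])
```

JSON suits nested data such as profiles with settings, while CSV works well for flat histories that users may want to open in a spreadsheet.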

Data Deletion Processes

Making data deletion as simple as data access is a cornerstone of transparent data handling. The standard that GDPR sets for consent, and that the ICO echoes in its guidance, is a useful benchmark:

"It shall be as easy to withdraw as to give consent".

For example, if a user signed up via an online form, they should be able to delete their account through an equally straightforward online process - ideally with just one click.

Platforms should allow users to delete an entire account or selectively remove specific data, such as session records or conversation histories. Features that handle sensitive inputs should include immediate delete options. Additionally, platforms must clarify whether deletion applies only to active databases or extends to backups as well.

Automating the deletion process based on clear retention schedules is another effective strategy. Once a coaching relationship ends or the data's purpose is fulfilled, the system should automatically delete or anonymize the information. This not only reduces security risks but also demonstrates respect for user privacy while easing administrative tasks.

| User Right | Platform Implementation Best Practice |
|---|---|
| Right of Access | Provide a secure portal for users to view and download personal data and tool outputs. |
| Right to Rectification | Offer a "profile settings" area where users can update their information. |
| Right to Erasure | Create a simple, one-step process for deleting accounts or specific data sets. |
| Right to Object | Include clear opt-out options, like privacy dashboards or unsubscribe links. |
| Data Portability | Ensure data exports are available in machine-readable formats, such as CSV or JSON. |

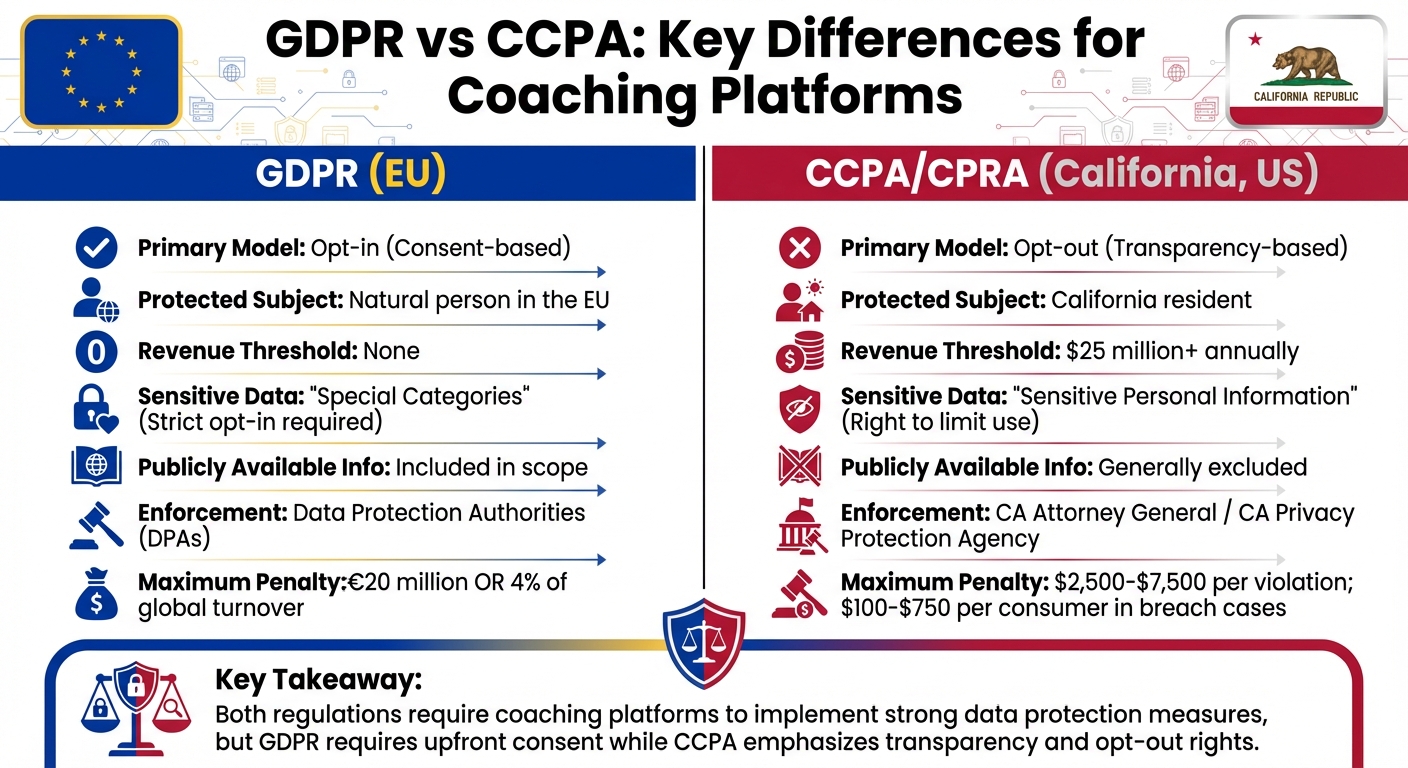

Key Regulations for Coaching Platforms

GDPR vs CCPA Privacy Regulations Comparison for Coaching Platforms

Coaching platforms must navigate a complex web of privacy laws worldwide, making it crucial to understand key frameworks to avoid penalties and maintain user trust. Two of the most impactful regulations are the General Data Protection Regulation (GDPR) in the European Union and the California Consumer Privacy Act (CCPA) in the United States.

The GDPR applies to any platform handling the data of individuals in the EU, regardless of the platform's physical location. For example, a U.S.-based coaching service working with European clients must comply with GDPR. This regulation requires platforms to have a clear legal basis for processing data, such as obtaining explicit consent or demonstrating contractual necessity for coaching services. Additionally, GDPR’s Article 9 imposes stricter rules for sensitive data, like health or mental health details, often requiring explicit opt-in consent.

The CCPA, on the other hand, governs for-profit businesses that do business in California and meet specific thresholds, such as earning $25 million or more in annual gross revenue or processing the personal information of at least 100,000 consumers or households. Unlike GDPR, CCPA emphasizes transparency and opt-out rights rather than requiring upfront consent. Platforms must provide users with options like a "Do Not Sell or Share My Personal Information" link, allowing them to opt out of data sales or sharing. The California Privacy Rights Act (CPRA), which amends CCPA, also introduces protections for "sensitive personal information", giving users the ability to limit how such data is used.

Penalties for non-compliance vary significantly. GDPR violations can lead to fines of up to €20 million or 4% of global annual turnover, whichever is higher, while CCPA civil penalties are $2,500 per violation, rising to $7,500 per intentional violation. In cases of data breaches, affected consumers may claim statutory damages ranging from $100 to $750 per consumer per incident. As Beatriz Peon from OneTrust aptly puts it:

"Privacy regulation is changing how organizations are measured, not just how they comply".

Comparing Major Privacy Regulations

The table below highlights the key differences between GDPR and CCPA/CPRA, helping coaching platforms determine which rules apply to their operations:

| Feature | GDPR (EU) | CCPA / CPRA (California, US) |
|---|---|---|
| Primary Model | Opt-in (Consent-based) | Opt-out (Transparency-based) |
| Protected Subject | Natural person in the EU | California resident |
| Revenue Threshold | None | $25 million+ |
| Sensitive Data | "Special Categories" (Strict opt-in) | "Sensitive Personal Information" (Right to limit use) |
| Publicly Available Info | Included in scope | Generally excluded |
| Enforcement | Data Protection Authorities (DPAs) | CA Attorney General / CA Privacy Protection Agency |
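
As a first-pass screen, the comparison above can be turned into a small check. The sketch below is deliberately simplified: `PlatformProfile` and `applicable_regimes` are hypothetical names, and only the criteria named in this article are modeled (EU individuals for GDPR; for-profit status, a California presence, and the revenue or consumer-count thresholds for CCPA/CPRA). Real applicability analysis involves more factors than these.

```python
from dataclasses import dataclass


@dataclass
class PlatformProfile:
    handles_eu_individuals_data: bool
    for_profit: bool
    does_business_in_california: bool
    annual_gross_revenue_usd: float
    california_consumers_processed: int


def applicable_regimes(p: PlatformProfile) -> list:
    """Return the regimes from the comparison table that likely apply."""
    regimes = []
    # GDPR has no revenue threshold: handling EU individuals' data is enough.
    if p.handles_eu_individuals_data:
        regimes.append("GDPR")
    # CCPA/CPRA: for-profit, active in California, and at least one threshold met.
    meets_threshold = (
        p.annual_gross_revenue_usd > 25_000_000
        or p.california_consumers_processed >= 100_000
    )
    if p.for_profit and p.does_business_in_california and meets_threshold:
        regimes.append("CCPA/CPRA")
    return regimes


us_platform = PlatformProfile(
    handles_eu_individuals_data=True,
    for_profit=True,
    does_business_in_california=True,
    annual_gross_revenue_usd=30_000_000,
    california_consumers_processed=5_000,
)
print(applicable_regimes(us_platform))  # ['GDPR', 'CCPA/CPRA']
```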

The Evolving Privacy Landscape

Privacy regulations are rapidly evolving beyond GDPR and CCPA. As of 2026, states like New Jersey, Tennessee, and Minnesota have their own comprehensive privacy laws in effect, requiring platforms to adapt their governance models. Globally, Vietnam’s personal data protection law took effect on January 1, 2026. Additionally, for platforms using AI in profiling, the EU AI Act introduces phased requirements for high-risk systems through 2026 and 2027.

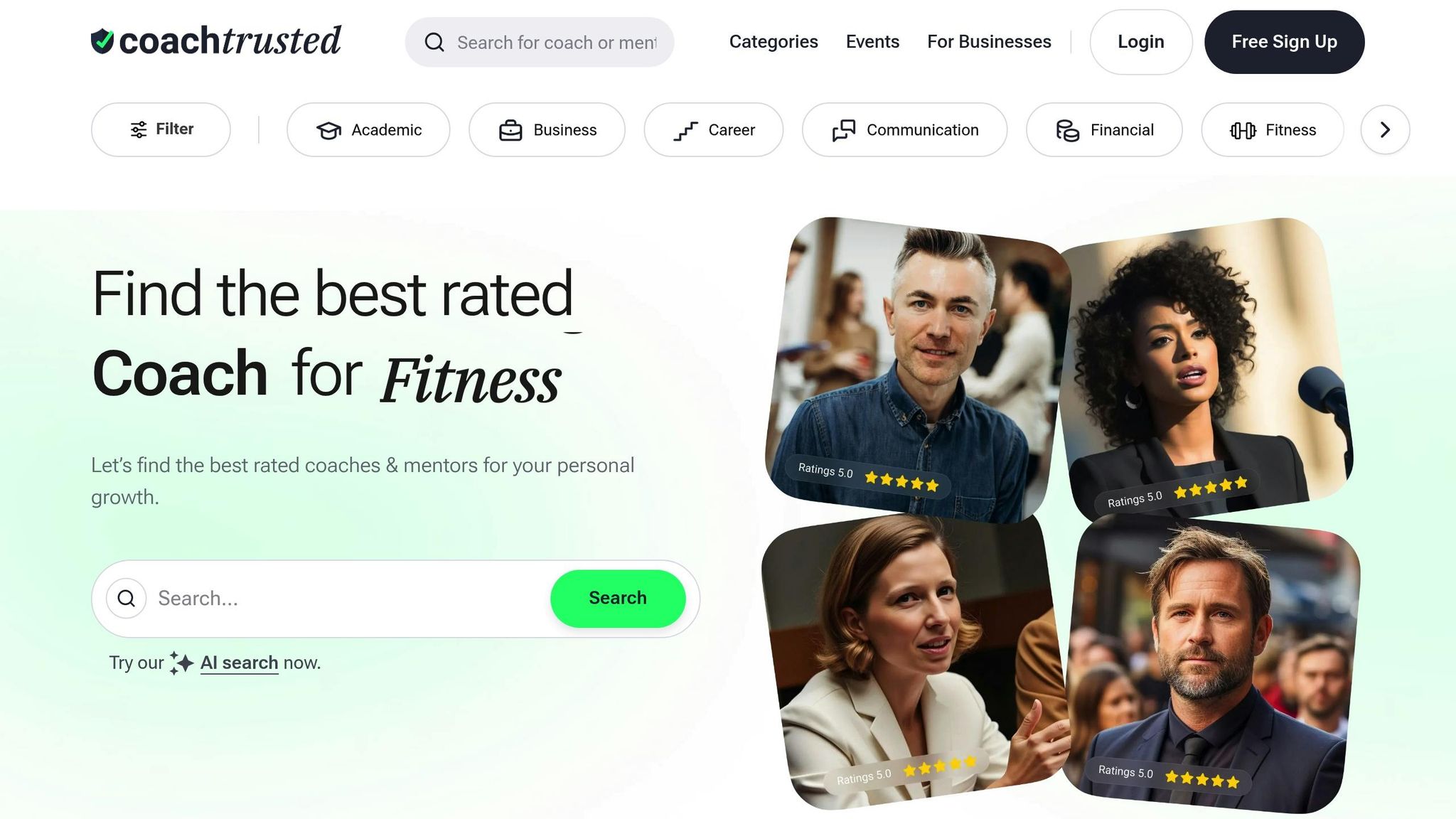

For U.S.-based platforms handling EU data, the Data Privacy Framework (DPF) offers a pathway for lawful data transfers: companies that self-certify with the U.S. Department of Commerce can receive personal data from the EU under the framework. Furthermore, the EU has extended the UK’s adequacy decision through December 2031. These developments highlight the need for coaching platforms to stay vigilant and continuously refine their data privacy practices, as seen with platforms like Coachtrusted.

Implementing Best Practices on Platforms like Coachtrusted

Coachtrusted takes a thorough approach to safeguarding both coach and client data. By applying privacy principles, the platform ensures a balance between making coach profiles visible and protecting sensitive information.

Protecting Verified Coach Profiles

Coachtrusted ensures trust and confidentiality by implementing a two-layer system for coach data. Public profiles display essential information like bios, specialties, and credentials, while sensitive documents - such as IDs, certificates, and background checks - are encrypted and stored in a secure, isolated environment. To comply with the integrity and confidentiality principle in GDPR Article 5(1)(f), role-based access controls restrict access to these sensitive documents exclusively to the Verification Team.
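
The two-layer split can be illustrated with a deny-by-default access check. The sketch below is an assumption about how such a rule might look, not Coachtrusted's actual implementation: the role names, field names, and functions are all hypothetical.

```python
from enum import Enum


class Role(Enum):
    CLIENT = "client"
    COACH = "coach"
    SUPPORT = "support"
    VERIFICATION_TEAM = "verification_team"


# Layer 1: shown on the public profile. Layer 2: verification documents.
PUBLIC_FIELDS = {"bio", "specialties", "credentials"}
SENSITIVE_FIELDS = {"id_document", "certificate_scan", "background_check"}


def can_read(role: Role, field: str) -> bool:
    """Public fields are open to every role; sensitive documents are
    readable only by the Verification Team; anything else is denied."""
    if field in PUBLIC_FIELDS:
        return True
    if field in SENSITIVE_FIELDS:
        return role is Role.VERIFICATION_TEAM
    return False  # unknown fields are denied by default


def visible_profile(role: Role, profile: dict) -> dict:
    """Filter a stored profile down to what the given role may see."""
    return {k: v for k, v in profile.items() if can_read(role, k)}


stored = {"bio": "Career coach", "id_document": "<encrypted blob>"}
print(visible_profile(Role.CLIENT, stored))  # {'bio': 'Career coach'}
```

Denying unknown fields by default means a newly added column stays hidden until someone explicitly classifies it.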

To further protect accounts, the platform enforces multi-factor authentication (MFA), such as mobile app codes, to prevent unauthorized access even if login credentials are compromised. For coaches accessing the platform via mobile devices, features like remote wiping and encrypted local storage add an extra layer of security in case a device is lost or stolen.
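
Mobile app codes of this kind are commonly time-based one-time passwords (TOTP, RFC 6238). The article does not say which scheme Coachtrusted uses, so the following is only a minimal standard-library sketch of how such codes are generated and checked; the function names and the one-step drift window are illustrative choices.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 TOTP code (HMAC-SHA1) for the given moment."""
    now = time.time() if at_time is None else at_time
    counter = int(now // step)  # number of elapsed time steps
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, per RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, at_time: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step or up to `window` steps either side,
    to tolerate small clock drift between phone and server."""
    now = time.time() if at_time is None else at_time
    return any(
        hmac.compare_digest(totp(secret, now + drift * step, step), submitted)
        for drift in range(-window, window + 1)
    )


# RFC 6238 test vector: ASCII secret "12345678901234567890" at t = 59 seconds.
print(totp(b"12345678901234567890", at_time=59, digits=8))  # 94287082
```

In production, a maintained library and per-user secrets stored encrypted would be preferable to hand-rolled code; the sketch only shows why a stolen password alone is not enough to log in.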

Securing Platform Communication

Coachtrusted prioritizes secure communication by implementing end-to-end encryption (E2EE) for messages and video calls, ensuring that only the intended participants can access the content. As Studyraid highlights:

"Privacy is not just a compliance requirement; it's a fundamental aspect of maintaining trust in the coaching relationship"

Additional measures include password protection, waiting rooms, and host-controlled admissions for video sessions. For email transmissions and client portals, Transport Layer Security (TLS) is used to secure data. Before recording any sessions, explicit written consent is required, along with clear communication about who will have access to the recordings.
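
The consent requirement for recordings can be enforced in code as a gate that runs before recording starts. The sketch below assumes a hypothetical `RecordingConsent` record per participant; the field names are illustrative and not taken from any specific platform.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class RecordingConsent:
    participant_id: str
    granted_at: datetime
    allowed_viewers: frozenset  # who was disclosed as having access
    withdrawn: bool = False


def may_start_recording(participants: set, consents: dict) -> bool:
    """Allow recording only if every participant has an active consent on file."""
    return all(
        pid in consents and not consents[pid].withdrawn
        for pid in participants
    )


now = datetime(2026, 2, 23, tzinfo=timezone.utc)
consents = {
    "coach-1": RecordingConsent("coach-1", now, frozenset({"coach-1", "client-7"})),
}
# The client has not consented yet, so recording stays off.
print(may_start_recording({"coach-1", "client-7"}, consents))  # False
```

Storing the disclosed viewer list alongside the consent makes it possible to show later exactly what each participant agreed to.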

To maintain a high level of security, Coachtrusted conducts regular audits, runs vulnerability scans, and keeps detailed audit logs so that suspicious activity can be identified and addressed promptly. Automated data retention policies ensure that information is deleted for coaches who leave the platform, integrating these practices into the platform’s overall security framework.
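
An automated retention rule of this kind usually reduces to a scheduled job that selects records past their retention window. The sketch below is illustrative only: the 30-day grace period, the record layout, and the function name are assumptions, since the article does not state Coachtrusted's actual retention period.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical grace period after a coach leaves; not a figure from the article.
RETENTION_AFTER_DEPARTURE = timedelta(days=30)


def records_due_for_deletion(records: list, now: datetime) -> list:
    """Return IDs of records whose coach left at least the retention period ago.
    Records for active coaches (coach_left_at is None) are never selected."""
    return [
        r["id"]
        for r in records
        if r["coach_left_at"] is not None
        and now - r["coach_left_at"] >= RETENTION_AFTER_DEPARTURE
    ]


now = datetime(2026, 2, 23, tzinfo=timezone.utc)
records = [
    {"id": "a", "coach_left_at": None},                      # still active
    {"id": "b", "coach_left_at": now - timedelta(days=45)},  # past the window
    {"id": "c", "coach_left_at": now - timedelta(days=5)},   # within the window
]
print(records_due_for_deletion(records, now))  # ['b']
```

Passing `now` in as a parameter keeps the rule easy to test, and writing each deletion to the audit log would tie the retention job back to the monitoring described above.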

Conclusion

Protecting data privacy on coaching platforms goes far beyond meeting legal requirements - it’s the cornerstone of the trust needed for meaningful coaching relationships. When clients know their sensitive information is safe, they’re more willing to open up about the real obstacles they face, whether it’s financial struggles or personal health issues.

The strategies outlined here - like integrating privacy from the start, using strong encryption, implementing role-based access, and being upfront about AI usage - create a secure environment where both coaches and clients can focus on achieving results. Platforms that prioritize these measures don’t just comply with regulations like GDPR or CCPA; they turn privacy into a competitive advantage, encouraging loyalty and word-of-mouth growth.

For example, platforms such as Coachtrusted take this seriously by verifying coach credentials, securing communication with end-to-end encryption, and performing regular security audits to find and fix weak spots. These ongoing efforts ensure a safer digital coaching experience for everyone involved.

As the coaching industry evolves, privacy is becoming a core value rather than an afterthought. Businesses are demanding tighter data protections, and clients are gravitating toward platforms that provide real security. By adopting privacy practices that focus on transparency, encryption, and giving users control, coaching platforms can not only protect their users but also build stronger, long-lasting relationships that drive their success forward.

FAQs

What data should a coaching platform avoid collecting?

A coaching platform should steer clear of gathering personal data that isn't essential for the coaching process. Depending on the type of coaching, that can include sensitive health information, financial details, or other personal details unrelated to the client's goals. By keeping data collection to only what's necessary, the platform minimizes privacy risks and builds stronger trust with its users.

How can I tell who can see my coaching notes and messages?

Who can see your coaching notes and messages depends on the platform's privacy settings and on the user roles it defines, such as coaches, clients, or administrators. To understand who can see your data and the extent of their access, take a close look at the platform's privacy policy and your account's sharing settings. Staying informed about these settings is key to keeping your information secure.

Will AI features use or store my session data?

AI tools often use session data to provide tailored coaching experiences. However, this data is typically not stored or used for training purposes unless clearly specified. Privacy measures differ across platforms, with many implementing encryption and offering users options to manage their data.